9,000 words (including acknowledgments)

Header image taken from here.


Nature is an interrogator, and consciousness is the answer.

Editor’s Note

The Qualia Research Institute has recently made me aware that there are considerably more overlaps between the content of this essay and ideas previously put forward by the institute than I initially realized. I have since revised the article so that it more fully attributes QRI’s research. In particular, I have created a special “Acknowledgments” footnote (denoted ‘A’ followed by the number of the footnote, e.g. A3) that cites similarities with QRI’s work.

Since interning at QRI last summer, my thinking on consciousness has been influenced strongly by its “memeplex,” the set of ideas and theories that define QRI as a research institute. I wouldn’t have conceived of the argument put forward in this article if I hadn’t been immersed in the rich and complex intersection of neuroscience, philosophy, and physics at QRI. I am deeply indebted to QRI for inspiring me to think more deeply and broadly about consciousness.

tl;dr (Summary)

In this essay, I propose a “full-stack” explanation of consciousness. (A1) I begin by discussing my views on the metaphysics of consciousness, in particular addressing the relationship between consciousness, math, and the physical world. Based on this philosophy, I then put forward a definition of consciousness centered around an information-theoretic measure, mutual information. I also present solutions to two significant yet unresolved questions about consciousness, namely the Binding Problem and the Palette Problem (A2), from both “algorithmic” and “implementational” levels of analysis.

Panpsychism

According to a philosophical view known as panpsychism, there is a fundamental unit of consciousness that pervades the universe. I’ve briefly discussed panpsychism elsewhere on this blog, and while I have adopted it as my stance on the metaphysics of consciousness, I have yet to provide a justification for it. The reasoning that follows is by no means airtight, and is meant to be viewed more as an outline of an argument than an argument itself:

Back in the summer of 2018, I claimed, on the basis of the Mary the Color Scientist thought experiment and other ideas, that mental (conscious) phenomena do not reduce to physical phenomena. Rather, physical and mental phenomena may actually be reflections of each other, like two opposite sides of the same coin. At the time, I didn’t really have anything precise to say about this “non-duality” between the physical and the mental; the coin analogy was nothing more than abstract metaphor. Now, however, I will try to sharpen the comparison, first by clarifying some misconceptions about the underlying nature of the physical. In particular, I hope to demonstrate that the physical reduces to math, and math describes consciousness.

In the 1600s, René Descartes stated that physical things, unlike mental things, are characterized by their extension in space. That is, whereas thoughts don’t occupy space, physical matter is extended in three dimensions: length, breadth, and depth. While Descartes’ ontology accords with classical or common intuitions about reality, it is inconsistent with contemporary science. The quantum wave function, the fundamental object of quantum mechanics, is not a classical wave oscillating in 3D space. While the squared magnitude of the wave function can be interpreted as a probability distribution over the particle’s possible positions (or momenta), the wave function itself is an intrinsically mathematical object that resides in a potentially infinite-dimensional vector space called the Hilbert space. The most elementary physical phenomena, therefore, are not characterized by their extension in 3D space. Rather, from a physical perspective, they are literally constituted of math.

This claim runs counter to the tacit assumption in physics that physically real attributes of the natural world are independent of the math that we use to model their behavior. For instance, most physicists would argue that we should not conflate the physical property of mass with the number that we derive when we mathematically infer the mass of an object. Yet I believe that this line of thinking is misguided. Math is not merely a convenient tool to explain physics; rather, physics is inherently mathematical. This identity between math and physics is most clearly observed through the study of symmetry, which I have discussed previously. Symmetries underpin modern physics. They refer not just to the rotational and mirror symmetries that we are familiar with in everyday life, but more broadly to “invariances under transformations,” or aspects of a system that remain the same after a change is applied to the system. Symmetries are, in essence, mathematical objects; they are defined as elements of algebraic structures known as groups. Arguably, fundamental particles in physics are nothing more than representations of certain symmetries, which implies that the particles are literally algebraic structures. Physical properties like mass and spin are merely numbers that can be derived from a series of mathematical operations performed on the groups. (See footnote 1 for the technical details.)

Based on the premise that physics reduces to math, physicists like Max Tegmark assert that everything in the universe is math, rather than merely being described by math. (This is known as his Mathematical Universe Hypothesis.) However, Tegmark’s philosophy is misguided; math is intrinsically a descriptive enterprise. If it were not, the universe would literally be composed of equations, vectors, numbers, and other mathematical objects, which simply wouldn’t make any sense. We can wrap our heads around the idea that a table is made of atoms, but it is nonsensical to suggest that a table is composed of vectors. How would it be possible for vectors to actually have a mass, to attract and repel each other, to arrange themselves in a three-dimensional structure with a certain shape, and to do all the other things that physical objects do?

Fig 0. The matrix on the left encodes the arrangement of squares on the chess board (1 = black, 0 = white). But how could the matrix possibly be equivalent to the chess board? How could an element of a matrix be black or white, let alone have a mass, texture, etc.?

Even more problematically, if the universe were made of math, then there would have to be one objective mathematical language in which the universe is written. Yet there are countless ways of mathematically expressing a physical fact. In the trivial case, the symbols in an equation can be arbitrarily replaced with any other set of symbols; Newton’s Second Law, F = ma, can just as easily be written A = bc. Tegmark would counter this objection by arguing that it stems from a misunderstanding of the Mathematical Universe Hypothesis. The theory claims that the symmetry underlying the symbols is objectively real, rather than the symbols themselves. In other words, different sets of symbols all point to a single algebraic structure that explains physical reality. But this belief in the “one true mathematical structure” may be illusory, as we can see by considering the C*-algebra, which is a prime candidate for this mathematical Holy Grail. The C*-algebra was originally developed in an effort to unify two different mathematical expressions of quantum mechanics: one involving infinite-dimensional matrices, and the other involving differential operators. It turns out that these are indeed two equivalent representations of the same C*-algebra. However, it is possible to have two inequivalent representations of one C*-algebra that are nevertheless equally true in their descriptions of physical phenomena. In particular, different observers can have different ways of observing a phenomenon (specifically, a quantum vacuum), yet it does not appear to be the case that one is more real than the other. (2)

Stephen Wolfram generalizes this idea in his recent landmark essay on a new path towards a unifying theory of physics. In brief, Wolfram claims that graphs are the underlying mathematical structures of the physical universe. (He is not using graphs in the colloquial sense, but rather is referring to sets of nodes that are connected by edges. See Part I of this essay series for a longer discussion on graphs in relation to consciousness.) (A3) Additionally, there are computational rules that govern the evolution of graphs. (3) Wolfram makes the remarkable claim that the universe is capable of using every possible computational rule to update the graphs corresponding to the physical phenomena within it. Yet observers in the universe inevitably pick a particular reference frame in the space of all possible computational rules, which corresponds to a certain mathematical language for describing their experience of the universe. To quote Wolfram, “I’ve always assumed that any entity that exists in our universe must at least ‘experience the same physics as us.’ But now I realize that this isn’t true. There’s actually an almost infinite diversity of different ways to [mathematically] describe and experience our universe.”

So, it is the observers who determine the mathematical description that best fits their experience of reality. Math, therefore, is a tool that observers use to describe their observations of the universe; it is not equivalent to the universe itself, since there are infinitely many other ways of mathematically representing the universe that are equally valid. (4)

In summary, I’ve argued thus far that physics reduces to math, but math is not equivalent to reality. Rather, math is merely a descriptive tool. What is it describing? Observations about reality. Furthermore, if we define consciousness as the act of observation, then we arrive at a new understanding of the relationship between physical and mental phenomena: the physical (i.e. math) describes the mental (i.e. consciousness), and the mental is described by the physical. (5) It is in this sense that the physical and the mental are two opposite sides of the same “reality coin.”

This conception of consciousness might seem rather far-fetched at first glance. How could the math of fundamental physics really be encoding consciousness? Isn’t consciousness a faculty possessed only by a vanishingly small subset of physical entities in the universe, namely human beings and perhaps a few other animals? According to popular notions of consciousness, yes. But not according to the theory of panpsychism, which, again, posits that there is a fundamental unit of consciousness everywhere in the universe. (A4) Panpsychism strikes many people as ludicrous because this purported “unit of consciousness” has yet to be rigorously defined. (6) Yet I believe that there is a way to precisely specify the nature of fundamental consciousness. As I have argued in the paragraphs above, observers define the mathematical language that best encapsulates the universe, suggesting that the notion of “observation” should amount to a mathematical formalism. (A formalism, according to the Oxford English Dictionary, is “a description of something in formal mathematical or logical terms.”) Determining this formalism is the key to establishing a legitimate, working theory of panpsychism. Moreover, the fact that math, which constrains the structure of the physical world, is conditional on observation indicates that the latter is a pervasive, fundamental feature of the universe. It is worth noting that the “observation” I am referring to is of a very fundamental kind, one that is much more rudimentary than the rich, vivid, multi-sensory, emotionally charged, conscious experience that we humans have. In other words, electrons, rocks, and chairs do not have thoughts and feelings, yet they can nonetheless “observe” reality in a certain sense, which I will seek to articulate in this essay.

There are three immediate objections to panpsychism that I can think of. Firstly, consciousness seems to arise primarily from the activity of neurons in the brain, so a theory of consciousness that is rooted entirely in neuroscience would appear to be much more parsimonious and sensible than a theory that appeals to fundamental physics, which ostensibly doesn’t have anything to do with subjective experience. But such “materialist” theories of consciousness fall prey to David Chalmers’ “Hard Problem.” I think that any explanation of consciousness derived solely from principles of neuroscience will never be able to solve the Hard Problem. (See my essay “Strange Loops and Consciousness” for the full argument.)

Secondly, there are many mathematical statements that have nothing to do with observation, let alone consciousness. What could F = ma possibly say about subjective experience? Yet I contend that every mathematical law corresponding to a real physical event encodes some information about consciousness. (7) The reasoning depends on the definition of consciousness that I set forth later in this essay, so I can’t yet offer a precise justification. Suffice it to say, for now, that all mathematical statements about physics set constraints on the repertoire of observations that physical objects are capable of.

Thirdly, how can we be sure that “fundamental observations” serve as the foundations for consciousness? Are the principles that govern human consciousness really the same as those that underlie observations in physics? Yes. The information-theoretic measure of consciousness that I propose is ubiquitous throughout nature, and has been studied extensively in relation to neuroscience. Human consciousness, however, is significantly different from fundamental consciousness for two reasons, as I argue below: 1) the “binding” of distinct observations into a unitary field of experience and 2) the extraordinarily diverse range or “palette” of experiences that human consciousness is capable of apprehending. Nonetheless, both of these unique features of human consciousness can ultimately be derived from the same information-theoretic principle that pervades physics.

In the remainder of this essay, I will discuss a formal definition of consciousness, and will then address the Binding and Palette Problems.

What is consciousness?

To define consciousness, we will first need to elucidate the meaning of an “observation” in physics, based on the argument given above. I don’t think that there’s a consensus definition of “observation,” but I would imagine that most scientists and philosophers would agree that it is inextricably linked to the act of measurement. Of course, measurement is also a general and rather ambiguous concept. It can be performed with a vast range of apparatuses, depending on the context, and pretty much any physical process can be cast as a “measurement.” For instance, if I were to throw a rock at the floor, the state of the rock afterwards serves as an indirect measure of the material that constitutes the floor. The rock would be more or less intact if the floor were made of rubber, but it could shatter if the latter were instead composed of hard metal. Is there a formal principle that unifies all measurements?

Yes. The principle lies in an information-theoretic concept called mutual information. Roughly speaking, the mutual information between a random variable X and another variable Y is the amount of information that can be gained about X by observing Y. To be more precise, the “amount of information” is quantified in information theory as entropy H, which is defined as:

$$H(X) = -\sum_{i=1}^{n} P(x_i)\,\log_b P(x_i)$$

Eq 1. Taken from Wikipedia.

where X is a random variable, P(x_i) are the probabilities of the individual values x_i of that random variable, and b is the base of the logarithm, which is 2 when entropy is expressed in units of bits. (8) Entropy can be viewed, roughly, as the average number of yes/no questions that need to be asked, under the best possible questioning strategy, in order to ascertain the value of a random variable. For instance, in order to determine the state of a fair coin (heads or tails), I would only need to ask one yes/no question: is it heads? Thus, the entropy associated with a fair coin is 1 bit. More generally, entropy quantifies the amount of uncertainty about a variable. Given that providing information about a variable entails a reduction in our uncertainty about it, information is inextricably linked to entropy.
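As a quick sanity check on Eq 1, here is a minimal Python sketch that computes entropy in bits (b = 2). The function name `entropy` and the example distributions are illustrative choices, not taken from any particular source. It reproduces the 1 bit of a fair coin, shows that a biased coin carries less uncertainty, and confirms the 6 bits needed to pin down one of the 64 squares of an 8×8 chess board.

```python
import math

def entropy(probs):
    """Shannon entropy in bits: H(X) = -sum_i P(x_i) * log2 P(x_i).

    Outcomes with probability 0 contribute nothing (0 * log 0 is taken as 0).
    """
    return -sum(p * math.log2(p) for p in probs if p > 0)

fair_coin = [0.5, 0.5]
biased_coin = [0.9, 0.1]          # hypothetical coin that lands heads 90% of the time
chess_square = [1 / 64] * 64      # uniform over the 64 squares of an 8x8 board

print(entropy(fair_coin))         # 1.0
print(entropy(biased_coin))       # about 0.469
print(entropy(chess_square))      # 6.0
```

The biased coin needs less than one question on average because its outcome is mostly predictable in advance, which is exactly the sense in which entropy measures uncertainty.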

Entropy is explicitly related to mutual information I(X;Y) in the following way:

$$I(X;Y) = H(X) - H(X \mid Y)$$

Eq 2. Taken, again, from Wikipedia.

where H(X|Y) is the conditional entropy of X given Y, that is, the uncertainty that remains about X once Y is known. In the words of neuroscientists Nicholas Timme and Christopher Lapish, the intuition here is that “the total entropy of X must be equal to the entropy that remains in X after Y is learned plus the information I(X;Y) provided by Y about X,” as we can confirm by rearranging Eq 2 such that H(X) is on the left-hand side.

Mutual information is a concrete method for quantifying the extent to which one variable measures another. For instance, if X is my body temperature and Y is the temperature measured on my thermometer, then observing Y eliminates my uncertainty about X entirely, so long as my thermometer is perfectly accurate. In that case H(X|Y) = 0, so the mutual information I(X;Y) reaches its maximum possible value, H(X).
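To make the thermometer example concrete, the sketch below computes H(X), H(X|Y), and I(X;Y) = H(X) − H(X|Y) from a joint probability table. The discretization is a hypothetical simplification: body temperature X takes one of two equally likely states (normal or fever), and the thermometer reading Y takes the same two values. Three made-up thermometers are compared: a perfect one, one that misreads 10% of the time, and one whose reading is independent of X.

```python
import math

def H(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute 0."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def mutual_information(joint):
    """joint[x][y] = P(X = x, Y = y). Returns (H(X), H(X|Y), I(X;Y)) in bits."""
    px = [sum(row) for row in joint]          # marginal P(X = x)
    py = [sum(col) for col in zip(*joint)]    # marginal P(Y = y)
    h_x = H(px)
    # H(X|Y) = sum_y P(y) * H(X | Y = y)
    h_x_given_y = sum(
        py[j] * H([joint[i][j] / py[j] for i in range(len(joint))])
        for j in range(len(py))
        if py[j] > 0
    )
    return h_x, h_x_given_y, h_x - h_x_given_y

perfect = [[0.5, 0.0], [0.0, 0.5]]        # reading always matches the true state
noisy = [[0.45, 0.05], [0.05, 0.45]]      # reading is wrong 10% of the time
useless = [[0.25, 0.25], [0.25, 0.25]]    # reading ignores the true state

for name, table in [("perfect", perfect), ("noisy", noisy), ("useless", useless)]:
    h_x, h_x_given_y, mi = mutual_information(table)
    print(f"{name}: H(X)={h_x:.3f}  H(X|Y)={h_x_given_y:.3f}  I(X;Y)={mi:.3f}")
```

The perfect thermometer yields I(X;Y) = H(X) = 1 bit, the noisy one about 0.531 bits, and the useless one 0 bits. In every case H(X) = H(X|Y) + I(X;Y), which is the rearranged form of Eq 2.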

Measures of mutual information have widespread applicability in physics, for instance in quantum mechanics. In fact, physicists have shown, unsurprisingly, that a measuring apparatus that is maximally precise in its measurements of particles in the double slit experiment will gain maximal information about those particles. Other physicists have generalized this finding by demonstrating that the reliability of a measuring apparatus in quantum mechanics is proportional to the mutual information between the observed system and the apparatus.

Given that an observation can be defined as an act of maximizing mutual information, I propose that consciousness is, fundamentally, described by mutual information. The crucial implication here is that any physical system that can be cast as a measurement apparatus is conscious on a fundamental level. In quantum mechanics, even air molecules could be viewed as measurement systems, in the sense that they are capable of reducing or “de-cohering” superpositions of quantum states to single, definite values. In doing so, the molecules, along with other sufficiently macroscopic physical systems in the environment, produce an irreversible change in the information that can be encoded about those states.

In the brain, mutual information is encoded by the activity of neurons. At the risk of oversimplification, neurons spike, or fire action potentials, when they are presented with a certain stimulus. While the stimulus is “on,” there is a high level of mutual information between the state of the stimulus and the spike count of the neuron. Indeed, the “Infomax Principle,” which builds on neuroscientist Horace Barlow’s ideas about efficient coding, claims that “early sensory systems try to maximize information transmission under the constraint of an efficient code, i.e. … neurons maximize mutual information between a stimulus and their output spike train, using as few spikes as possible.” Hence, we find neurons that respond selectively to only one stimulus or a very small subset of stimuli, such as neurons in the medial temporal lobe that appear to fire only in response to images (and other visual representations) of Jennifer Aniston.

Perhaps the defining feature of consciousness is not merely the act of observation itself but rather the subjective feeling of what it’s like to observe something, also known as qualia. It is this latter quality of consciousness that makes it so hard to explain with a scientific theory. Can we specify the qualia associated with the fundamental consciousness described by mutual information? In other words, what does an air molecule feel when it “measures” a quantum system, or a camera when it captures an image of a chess board? By seeking answers to these questions, we are inevitably wandering into speculative territory. (See footnote 9 for some conjectures.) However, I don’t think that discussions about the qualia associated with arrays of mutual information are inherently speculative. The right philosophical assumptions about consciousness imply that we can give objective descriptions of qualia. In particular, qualia structuralism indicates that the mathematical object corresponding to consciousness “has a rich set of formal structures,” according to philosopher Mike Johnson of the Qualia Research Institute (QRI), who developed this view. These structures likely set constraints on qualia, thereby ruling out the possibility of certain experiences. For instance, some combinations of auditory and olfactory information may be impossible to process, and would therefore be inaccessible to consciousness.

It is worth noting that I don’t believe consciousness monotonically “increases in magnitude,” whatever that means, as the corresponding system encodes more bits of mutual information. For instance, it takes 6 bits of information to represent an object’s position on an 8×8 chess board, whereas, as discussed above, it only takes 1 bit to encode the state of a coin. Does that mean that a system that is able to measure a chess board is somehow more conscious than one that measures coin flips? Certainly not. As Andrés Gómez Emilsson, director of research at QRI, has said, a system only becomes more conscious when it is capable of binding together bits of mutual information. In fact, it would be more accurate to state that a unit of consciousness is an array of mutual information bound by gauge invariance / coherence, two concepts that I will elucidate below. So, I will now turn my attention to the issue of phenomenal binding (“phenomenal” is synonymous with “conscious”). But first:

A brief note on Marr’s levels of analysis

Neuroscientist David Marr famously distinguished between three levels of analysis:

Computational: “What is the goal of the computation [and] why is it appropriate?”

Algorithmic: “How can this computational theory be implemented? In particular, what is the representation for the input and output, and what is the algorithm for the transformation?”

Implementational: “How can the representation and algorithm be realized physically?” (A5)

It is important not to confuse the three levels and to be clear about which of the levels a theory is intended to address. While I will not delve into the goals of the computations underlying the solutions to the Binding and Palette Problems, I will discuss each of them from both an algorithmic and implementational perspective. In the context of neuroscience, the algorithm, roughly speaking, can be viewed as the “code” that the brain is running, and the implementation consists of the neuronal mechanisms that the brain recruits in order to execute the code. (10)

The Binding Problem

The Binding Problem asks: how do distinct objects of experience get bound together into a single, unified consciousness? In other words, why do I have one consciousness of all the objects in my perceptual field – the sight of my laptop, the sound of the air conditioner in my room, the feeling of my feet touching the floor – rather than a separate consciousness of each one?

I first started writing about the Binding Problem last summer while working at QRI, noting that it is highly nontrivial. Modern neuroscientists who research the problem have tended to argue that the synchronization of neural activity is responsible for the binding of the conscious experiences encoded by that activity. QRI has argued, for reasons that I discussed back then, that this explanation does not offer a satisfying resolution to the problem. In December, I suggested that binding implies invariance in the mathematical structure that corresponds to consciousness. Essentially, when different objects of experience are bound together, there is a single structure that underlies all of them. Each possible type of experience is encoded by a distinct set of coordinate axes within that structure. Furthermore, binding doesn’t happen instantaneously, even though it appears otherwise; strictly speaking, I am not conscious of both sight and sound at the same time, but rather my attention switches very rapidly between the two. So, binding actually unfolds over a series of very brief moments, during which the mathematical structure of consciousness rotates between the corresponding coordinate axes. In this way, the structure remains the same, while nevertheless encoding a variety of objects of experience.

I initially proposed that the Hilbert space is the mathematical structure of consciousness (A6), but really the most essential idea of that essay was the claim that different conscious experiences can be represented as a set of transformations (e.g. rotations) of the mathematical structure of consciousness. Binding entails that the structure is invariant under those transformations. This section will synthesize my ideas about mutual information with the claim that binding is described by a symmetry in the mathematical object of consciousness.

Algorithmic level of analysis

As physicist Victor Stenger explains, rotations of coordinate axes are sometimes referred to in physics as gauge transformations, and symmetries under those transformations are called gauge invariances. (A7)

For instance, consider a vector v situated in a coordinate system with axes x1 and y1.

Fig 1.

If I were to rotate the coordinate system by an angle 𝛼, then its axes would shift to a new pair, x2 and y2.

Fig 2.

Importantly, the vector’s magnitude and direction do not change under the rotation of the coordinate axes; only its components, i.e. its coordinates along each axis, change. Thus, the vector exhibits gauge invariance.
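The rotation in Figs 1 and 2 can be checked numerically. This minimal sketch assumes a concrete vector v = (3, 4) and an angle 𝛼 = 30°, both chosen purely for illustration, and uses the standard formula for re-expressing a vector in axes rotated by 𝛼. The components change, but the length (and, after rotating back, the original description) is recovered exactly.

```python
import math

def rotate_axes(vx, vy, alpha):
    """Components of the same vector expressed in axes rotated by alpha (radians)."""
    c, s = math.cos(alpha), math.sin(alpha)
    return c * vx + s * vy, -s * vx + c * vy

vx, vy = 3.0, 4.0              # components along x1, y1 (illustrative values)
alpha = math.pi / 6            # rotate the axes by 30 degrees

x2, y2 = rotate_axes(vx, vy, alpha)
print(f"old components: ({vx:.3f}, {vy:.3f})  length {math.hypot(vx, vy):.3f}")
print(f"new components: ({x2:.3f}, {y2:.3f})  length {math.hypot(x2, y2):.3f}")

# Rotating the axes back by -alpha recovers the original components,
# confirming that only the description changed, not the vector itself.
xb, yb = rotate_axes(x2, y2, -alpha)
print(f"rotated back:   ({xb:.3f}, {yb:.3f})")
```

The components go from (3, 4) to roughly (4.598, 1.964), yet the length stays 5 in both coordinate systems.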

In physics, invariances under coordinate transformations also imply that a mathematical function known as the Lagrangian remains unchanged. (See footnote 11 for a mathematical explanation of the relationship between coordinate rotations and the Lagrangian.) In classical mechanics, the equations of motion for a system can be derived from the Lagrangian, once something known as the Euler-Lagrange equation is applied to it. Rotations are only one type of transformation under which the Lagrangian stays the same, and in fact the invariance of the Lagrangian is the true, defining essence of gauge symmetry. This invariance, I argue, underlies phenomenal binding.
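A small numerical illustration of a Lagrangian that is (or is not) invariant under rotations follows. It is a sketch under stated assumptions: a single particle in 2D with L = T − V, unit mass and unit spring constant, and two hypothetical potentials. One potential, V = ½kr², depends only on distance from the origin; the other, V = ½kx², singles out the x direction. Evaluating each Lagrangian on the same state before and after rotating the coordinates shows that only the rotationally symmetric one is unchanged.

```python
import math

def rotate(x, y, alpha):
    """Re-express a 2D vector in axes rotated by alpha (radians)."""
    c, s = math.cos(alpha), math.sin(alpha)
    return c * x + s * y, -s * x + c * y

def lagrangian_central(x, y, vx, vy, m=1.0, k=1.0):
    # L = T - V with a rotationally symmetric potential V = k r^2 / 2
    return 0.5 * m * (vx**2 + vy**2) - 0.5 * k * (x**2 + y**2)

def lagrangian_axis(x, y, vx, vy, m=1.0, k=1.0):
    # L = T - V with a potential that depends on x only (not rotation-invariant)
    return 0.5 * m * (vx**2 + vy**2) - 0.5 * k * x**2

x, y, vx, vy = 1.0, 2.0, 0.5, -1.0   # arbitrary illustrative state
alpha = 0.7                           # arbitrary rotation angle in radians

x2, y2 = rotate(x, y, alpha)          # positions and velocities are vectors,
vx2, vy2 = rotate(vx, vy, alpha)      # so both rotate the same way

print(lagrangian_central(x, y, vx, vy), lagrangian_central(x2, y2, vx2, vy2))
print(lagrangian_axis(x, y, vx, vy), lagrangian_axis(x2, y2, vx2, vy2))
```

The first line prints the same value twice (up to rounding), while the second does not: rotational symmetry is a property of the particular Lagrangian, not something every system has.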

There is one other property of the Lagrangian that I have to mention before we will be ready to explore its relationship with phenomenal binding. Namely, physical systems follow the path that minimizes (more precisely, makes stationary) the action, the sum of the Lagrangian’s values along that path; this is what leads them to take the shortest path from one point to another, either in physical, 3D space or in a configuration space. As Encyclopedia Britannica states, “One may think of a physical system, changing as time goes on from one state or configuration to another, as progressing along a particular evolutionary path, and ask, from this point of view, why it selects that particular path out of all the paths imaginable. The answer is that the physical system sums the values of its Lagrangian function for all the points along each imaginable path and then selects that path with the smallest result. This answer suggests that the Lagrangian function measures something analogous to increments of distance.” Computing the shortest path, or “geodesic,” is trivially easy when the surface is flat (it’s a straight line between the origin and the destination), but the calculation becomes harder when the surface is curved. For instance, Fig 3 demonstrates that, due to the curvature of the Earth, the geodesic from California to Germany is not a straight line on a 2D map, but rather a curved trajectory.

Fig 3. The “geodesic,” or shortest path, from California to Germany. Image taken from here.
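The Britannica description (sum the Lagrangian along each candidate path, then pick the smallest total) can be acted out directly. This sketch assumes the simplest possible case, a free particle of unit mass with no potential energy, so the Lagrangian is just kinetic energy, ½mv². It compares two hypothetical paths from x = 0 at t = 0 to x = 1 at t = 1: a straight, constant-velocity path and a detour that wiggles along the way.

```python
import math

def action(path, t_end=1.0, m=1.0, n=1000):
    """Discretized action: sum of L = (1/2) m v^2 over n small time steps (free particle, V = 0)."""
    dt = t_end / n
    total = 0.0
    for i in range(n):
        t0, t1 = i * dt, (i + 1) * dt
        v = (path(t1) - path(t0)) / dt     # average velocity over this step
        total += 0.5 * m * v**2 * dt       # Lagrangian value times step length
    return total

def straight(t):
    return t                                   # x(0) = 0, x(1) = 1, constant velocity

def wiggly(t):
    return t + 0.3 * math.sin(math.pi * t)     # same endpoints, but detours in between

print(f"straight path action: {action(straight):.4f}")
print(f"wiggly path action:   {action(wiggly):.4f}")
```

The straight path gives an action of 0.5 and the wiggly one about 0.722, so summing the Lagrangian selects the straight line, the geodesic of this flat, one-dimensional setting. For a general system the true path only makes the action stationary, which this toy case does not test.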

Furthermore, if the surface is not static, then the geodesic between any two points may also be subject to change over time. (12) But if the Lagrangian is invariant, then the shortest path stays the same despite the transformations. Thus, gauge invariances ensure that a system traversing a curved surface will always take the geodesic path even when the surface is perturbed.

This discussion is relevant for consciousness because the mathematical structure corresponding to mutual information is a curved “manifold” (a generalization of surfaces to higher dimensions), for reasons that I will elaborate on below. In brief, I claim that peaks on the manifold correspond to points with maximally high mutual information. Whenever a new perceptual object enters a person’s consciousness, the manifold is perturbed, such that the locations of the peaks are rearranged. (The heights of the peaks may also change.) Consequently, the brain has to compute the shortest distance to the new peaks.

Biswa Sengupta’s paper “Towards a Neuronal Gauge Theory,” co-authored with Karl Friston, the creator of the Free Energy Principle, provides significant insight into the mutual information manifold, while also explaining why it would be curved. (An important caveat: the manifold described in the paper is actually a manifold defined over free energy, not mutual information. Nevertheless, Sengupta’s reasoning still applies.) The manifold defines a quantity of mutual information for each point in a configuration space of probability distributions. Why probability distributions? Recall that the entropy of a random variable X, as well as the mutual information between X and another variable, is calculated by summing over the probabilities of the different values of X. So, in order to determine the entropy of a random variable, we need to know its probability distribution. In the brain, the relevant random variables are the firing rates of neurons (13), and the probability distributions encode the likelihood that neurons will fire in response to a certain stimulus. If we assume that all the distributions are Gaussian (more commonly known as “the bell curve”), then the space of all possible distributions, or the configuration space, is 2D, since all Gaussian distributions are fully characterized by two parameters: their mean and their variance. When an amount of mutual information is associated with each distribution, then a 3D manifold arises. This manifold is curved because, as Sengupta notes, the space of normal (Gaussian) distributions is endowed with a hyperbolic, rather than a (flat) Euclidean, geometry.
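One way to see the hyperbolic geometry of the space of Gaussians is to measure distances with the Fisher information metric, the standard metric of information geometry. Treating it as the relevant metric here is an assumption of this sketch. For one-dimensional normal distributions the metric is ds² = (dμ² + 2dσ²)/σ², a rescaled hyperbolic half-plane, and its geodesic distance has the closed form used below. The sketch compares the same unit step in the mean at two different widths σ.

```python
import math

def fisher_rao_distance(mu1, sigma1, mu2, sigma2):
    """Geodesic distance between N(mu1, sigma1^2) and N(mu2, sigma2^2)
    under the Fisher information metric ds^2 = (dmu^2 + 2 dsigma^2) / sigma^2."""
    arg = 1 + ((mu1 - mu2) ** 2 + 2 * (sigma1 - sigma2) ** 2) / (4 * sigma1 * sigma2)
    return math.sqrt(2) * math.acosh(arg)

# The same step of 1 in the mean, taken at two different widths
narrow = fisher_rao_distance(0.0, 1.0, 1.0, 1.0)
wide = fisher_rao_distance(0.0, 10.0, 1.0, 10.0)
print(f"sigma = 1:  {narrow:.3f}")   # about 0.980
print(f"sigma = 10: {wide:.3f}")     # about 0.100
```

In a flat (Euclidean) parameter space both steps would have the same length. Here the step between narrow, easily distinguished distributions is almost ten times longer than the step between wide, overlapping ones, which is the kind of position-dependent distance that a curved geometry produces.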

Each object of consciousness is associated with a different mutual information manifold. For instance, when I consciously perceive an image of Jennifer Aniston, a particular set of neurons is activated: a single neuron in the medial temporal lobe, as previously discussed, in addition to neurons in, say, the inferior parietal lobe, which would regulate my emotional response to Jennifer Aniston’s face; the precuneus, which would retrieve memories about Jennifer Aniston; and other regions of the brain. Each of the activated neurons is maximizing mutual information, so the probability distributions encoded by those neurons will become peaks in the mutual information manifold. (14) However, when I simultaneously perceive a different perceptual stimulus, such as a fishy odor, a distinct set of neurons will be activated, and hence the peaks of the manifold will shift to a new set of points (Fig 4). In order to bind the fishy odor to the image of Jennifer Aniston in my consciousness, the brain must transition very quickly between the two configurations of neuronal activity, thereby giving rise to the illusion that I am aware of both perceptual stimuli at the same time. So, the brain will be inclined to take the shortest path from the old set of peaks to the new one. To be more precise, “distances in the curved geometry” of the manifold, according to Sengupta, “correspond to the relative entropy in going from one point on the … manifold to another.” Therefore, the geodesic minimizes gains in entropy.
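Relative entropy, the quantity Sengupta identifies with distances on the manifold, has a closed form when both distributions are Gaussian. The minimal sketch below computes the Kullback-Leibler divergence D_KL(N(μ1, σ1²) ‖ N(μ2, σ2²)) in nats. The two example distributions are hypothetical stand-ins for an “old” and a “new” configuration. The example also shows that relative entropy is not symmetric: going from one point to another does not cost the same as going back.

```python
import math

def kl_gaussian(mu1, sigma1, mu2, sigma2):
    """Relative entropy D_KL(N(mu1, sigma1^2) || N(mu2, sigma2^2)) in nats."""
    return (math.log(sigma2 / sigma1)
            + (sigma1**2 + (mu1 - mu2) ** 2) / (2 * sigma2**2)
            - 0.5)

old = (0.0, 1.0)   # hypothetical (mean, std) before the new stimulus
new = (1.0, 2.0)   # hypothetical (mean, std) after the new stimulus

forward = kl_gaussian(*old, *new)
backward = kl_gaussian(*new, *old)
print(f"old -> new: {forward:.3f} nats ({forward / math.log(2):.3f} bits)")
print(f"new -> old: {backward:.3f} nats ({backward / math.log(2):.3f} bits)")
```

The two directions give about 0.443 and 1.307 nats respectively. Because of this asymmetry, relative entropy is strictly a divergence rather than a true distance, although for nearby distributions it behaves like (half) a squared distance.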

Fig 4. What is the shortest path from the old set of peaks (A, B, C) to the new set of peaks (A’, B’, C’) on the mutual information manifold? (Note that the manifold should be curved.) This is the optimization problem that consciousness is constantly solving. Each set of peaks corresponds to the configuration of neurons that is maximally active when a certain object of consciousness is perceived. For instance, {A, B, C} could represent the neurons that are activated when I see an image of Jennifer Aniston. {A’, B’, C’} could encode the neurons that fire when I simultaneously smell a fishy odor. The image of Jennifer Aniston and the smell of the fishy odor are bound together in my consciousness since they are perceived simultaneously.

Implementational level of analysis

Recall that the shortest path on the mutual information manifold is the one that accumulates the least relative entropy. Moreover, there is a direct correspondence between bits of entropy and energy. Neuroscientist Simon Laughlin calculated that the energetic cost of transmitting one bit of information across a neuronal synapse is about 10⁴ molecules of ATP, or roughly 5 × 10⁻¹⁶ joules. Thus, the physical implementation of a reduction in relative entropy is a proportionate decrease in relative energy; in other words, the brain operates in accordance with a principle of minimizing energy loss.
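Converting Laughlin’s estimate of about 10⁴ ATP molecules per bit into joules is simple arithmetic. The sketch below assumes the textbook standard free energy of ATP hydrolysis, about 30.5 kJ/mol. Under cellular conditions the figure is closer to 50 kJ/mol, which would nearly double the result.

```python
AVOGADRO = 6.02214076e23           # molecules per mole (exact SI value)
ATP_HYDROLYSIS_J_PER_MOL = 30.5e3  # standard free energy of hydrolysis, J/mol (assumed)
ATP_PER_BIT = 1e4                  # Laughlin's estimate for a chemical synapse

joules_per_atp = ATP_HYDROLYSIS_J_PER_MOL / AVOGADRO
joules_per_bit = ATP_PER_BIT * joules_per_atp
print(f"{joules_per_atp:.2e} J per ATP, {joules_per_bit:.2e} J per bit")
# 5.06e-20 J per ATP, 5.06e-16 J per bit
```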

It is worth pausing here to note a connection between these ideas and two other concepts in neuroscience. The first is the notion of an energy landscape, which I wrote about in last month’s blogpost. If we redefine the energy landscape such that it is parametrized in terms of probability distributions rather than populations of neurons, then there is a one-to-one mapping, or “isomorphism,” between the mutual information manifold and the energy landscape. Last month, I contended that valence, or the quality of a person’s conscious experience, depends on structural features of the energy landscape, namely its “ruggedness.” Due to the isomorphism, tuning the ruggedness of the mutual information manifold should also modulate emotional valence. The second concept is the Free Energy Principle, which states that the brain minimizes free energy. Free energy is the sum of two quantities. The first is the surprise, or negative log-probability, of receiving a sensory stimulus given a certain model. The second is the relative entropy between the probability distribution encoded by the brain and the true posterior distribution over the causes of the stimulus, a measure that is closely analogous to mutual information. My claims about mutual information, therefore, share a great deal in common with the Free Energy Principle, and in fact, its creator, Karl Friston, states that it “can be regarded as a probabilistic generalization of the infomax principle.” Importantly, however, the Free Energy Principle is not a theory of consciousness, as Friston believes that consciousness is somewhat of an illusion.
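The decomposition of free energy into surprise plus relative entropy can be checked on a toy discrete model. Every number below is hypothetical:

- two hidden causes h,
- a prior p(h),
- a likelihood p(s | h) for a single observed stimulus s,
- an arbitrary recognition distribution q(h) standing in for the brain’s encoding.

Variational free energy is F = Σ q(h)[ln q(h) − ln p(s, h)]. It equals −ln p(s) plus the KL divergence from q to the true posterior. So it bottoms out at the surprise exactly when q matches the posterior.

```python
import math

prior = {"cat": 0.7, "dog": 0.3}       # p(h), hypothetical
likelihood = {"cat": 0.2, "dog": 0.9}  # p(s | h) for one observed stimulus s, hypothetical

def free_energy(q: dict) -> float:
    """F = sum_h q(h) * [ln q(h) - ln p(s, h)], in nats."""
    return sum(q[h] * (math.log(q[h]) - math.log(prior[h] * likelihood[h]))
               for h in q if q[h] > 0)

def kl(q: dict, p: dict) -> float:
    """Relative entropy KL(q || p) over the same discrete support, in nats."""
    return sum(q[h] * math.log(q[h] / p[h]) for h in q if q[h] > 0)

evidence = sum(prior[h] * likelihood[h] for h in prior)  # p(s)
surprise = -math.log(evidence)
posterior = {h: prior[h] * likelihood[h] / evidence for h in prior}

q = {"cat": 0.5, "dog": 0.5}  # an imperfect recognition distribution
print(math.isclose(free_energy(q), surprise + kl(q, posterior)))  # True
print(math.isclose(free_energy(posterior), surprise))             # True
```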

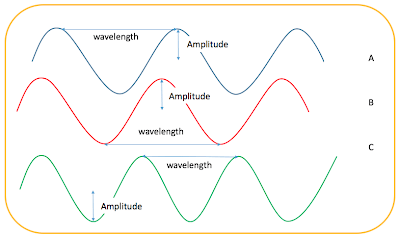

What are the neural mechanisms underlying the minimization of energy loss? One promising candidate is neural coherence, or coherence between oscillations produced by the brain. (A8) Coherence, in general, is a phenomenon in which two waves have identical frequencies and waveforms but are separated by a constant phase difference, meaning that the waves are the same but one is shifted relative to the other by a fixed amount. Thus, waves A and B in Fig 5 are coherent, but wave C is coherent with neither, as C has a shorter wavelength than both A and B.
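One common way to quantify the constant phase relationship described above is the phase-locking value (PLV). The PLV is the magnitude of the time-average of e^{iΔφ(t)}. It is 1 when the phase difference Δφ stays fixed and near 0 when Δφ drifts, as it does for waves of different frequencies.

The sketch below assumes ideal sinusoids whose phases are known exactly. Real recordings would first need a phase estimate, for example from a Hilbert transform. The name `phase_locking_value` is hypothetical.

```python
import cmath
import math

def phase_locking_value(f1: float, f2: float, offset: float,
                        duration: float = 1.0, n: int = 1000) -> float:
    """|mean(exp(i * (phi1 - phi2)))| for two sinusoids sampled n times."""
    total = 0j
    for k in range(n):
        t = duration * k / n
        phi1 = 2 * math.pi * f1 * t
        phi2 = 2 * math.pi * f2 * t + offset
        total += cmath.exp(1j * (phi1 - phi2))
    return abs(total) / n

print(round(phase_locking_value(10, 10, math.pi / 3), 6))  # same frequency, fixed lag
print(round(phase_locking_value(10, 13, 0.0), 6))          # phase difference drifts
```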

Fig 5. Coherence. Image taken from here.

Crucially, coherence is a highly energy-efficient mode of communication; when energy is transferred from one wave to the next, very little is lost in the process. As physicist Mae-Wan Ho explains, “an intuitive way to appreciate how coherence affects the rapidity and efficiency of energy transfer is to think of … a line of construction workers moving a load. As the one passes the load, the next has to be in readiness to move it, and so a certain phase relationship in their side-to-side movement has to be maintained. If the rhythm (phase relationship) is broken … much of the energy would be lost and the work would be slower, more arduous, and less efficient.”

Neuroscientist Pascal Fries has advanced the theory that populations of neurons communicate by forming coherent patterns of oscillations with each other. (Oscillations arise from alternations between neural excitation and inhibition.) His reasoning evokes Ho’s analogy; in order for neuron A to send a signal to neuron B, neuron A has to time its transmission such that the signal will arrive at neuron B when the latter is in the excitable phase of its oscillation. Maintaining this phase relationship is essential for securing the transmission of a signal down a chain of neural populations, as well as for preserving energy. Typically, the inhibitory phase is longer than the excitatory phase, thereby ensuring that competing signals do not get transmitted.
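Fries’ timing argument can be illustrated with a toy simulation, under assumptions that are all hypothetical:

- The receiving population is excitable only during the first 30% of each 25 ms cycle (roughly a 40 Hz gamma rhythm).
- The sender fires once per cycle of its own rhythm.
- Each spike takes a fixed 5 ms to arrive.

The function `fraction_received` is a hypothetical name, not part of any library.

```python
def fraction_received(sender_period: float, receiver_period: float,
                      sender_offset: float, delay: float = 5.0,
                      excitable_fraction: float = 0.3, n_spikes: int = 1000) -> float:
    """Fraction of sender spikes that arrive while the receiver is excitable.

    Times are in milliseconds. The receiver's excitable window is assumed
    to occupy the first `excitable_fraction` of each of its cycles.
    """
    hits = 0
    for k in range(n_spikes):
        arrival = k * sender_period + sender_offset + delay
        receiver_phase = (arrival % receiver_period) / receiver_period
        if receiver_phase < excitable_fraction:
            hits += 1
    return hits / n_spikes

print(fraction_received(25.0, 25.0, sender_offset=0.0))   # 1.0: locked, well timed
print(fraction_received(25.0, 25.0, sender_offset=10.0))  # 0.0: locked, badly timed
print(fraction_received(23.0, 25.0, sender_offset=0.0))   # 0.32: not locked
```

In this toy, only a sender that is phase-locked to the receiver with the right lag gets every spike through. An unlocked sender gets through at roughly the chance rate set by the width of the excitable window.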

Surprisingly, mutual information has not really been studied in relation to neural coherence. Nevertheless, it is possible to link the two. Information transmission, measured using mutual information, is maximized when there is an intermediate ratio of excitatory to inhibitory inputs in the brain. According to neuroscientist Woodrow Shew, a large excitatory/inhibitory (E/I) ratio yields high correlations in neural activity, since exciting one neuron is likely to excite many other neurons. Yet excessive correlations limit the amount of information that can be transmitted, because it is the periods of inhibition that allow different signals to be distinguished from one another. Conversely, a low E/I ratio significantly reduces overall activity in the brain, which prevents neurons from encoding information about the environment. Thus, an intermediate E/I ratio is most conducive to information transmission. Moreover, neural oscillations arise when excitation is proportionately counterbalanced with inhibition, as would occur when the E/I ratio is tuned to a moderate level. By Fries’ argument, coherence between those oscillations facilitates the transfer of mutual information across neural populations.
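The shape of this argument, that both too little and too much activity limit information, shows up even in a deliberately crude toy. This is not Shew’s model.

Assume, hypothetically, that the E/I ratio r simply sets the probability p = r/(1 + r) that a binary unit is active. The unit’s entropy bounds how much information its output can carry. That entropy peaks at an intermediate ratio and falls off at both extremes.

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy in bits of a unit that is active with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def activity_from_ei_ratio(r: float) -> float:
    """Hypothetical mapping from E/I ratio to activation probability."""
    return r / (1 + r)

for r in (0.01, 0.1, 1.0, 10.0, 100.0):
    print(f"E/I = {r:>6}: {binary_entropy(activity_from_ei_ratio(r)):.3f} bits")
```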

In summary, neural coherence communicates information from one configuration of neuronal activity to another. Different configurations correspond to different objects of consciousness; some may encode an image, others may encode a sound, and so on. Additionally, each configuration perturbs the mutual information manifold, producing a distinct set of peaks. Mathematically, “communicating information” entails traveling from one peak to another on the manifold. By minimizing energy loss, coherence ensures that the brain will take the shortest path between the two peaks. If the brain always follows this “geodesic” on the manifold, then it is likely that the Lagrangian stays constant, meaning that consciousness respects gauge invariance. Since I postulate that this invariance underlies phenomenal binding, neural coherence is responsible for the unity of consciousness.

Now, it may appear that I am contradicting myself. After all, coherence is essentially one form of neural synchronization, and at the beginning of this section, I did criticize existing theories of phenomenal binding precisely on the grounds that neural synchronization, in principle, cannot give rise to the unity of consciousness. However, those theories are faulty because they do not tie coherence to invariances in the mathematical structure of consciousness. When this connection is made clear, then a coherence-based account of phenomenal binding becomes valid.

The Palette Problem

The Palette Problem asks: how do we explain the extraordinarily vast repertoire of experiences that human consciousness is capable of having? Even the olfactory system can, on its own, detect more than 1 trillion smells. (A9) Yet the five senses only disclose a thin slice of the state space of human consciousness. Not only is there the depth and complexity of thought and emotion, but also the rich, “exotic” qualia that are accessible through psychedelics and other psychoactive substances, which often transcend the language that we typically use to categorize conscious experiences. However, theorizing about the entire state space of consciousness is extremely ambitious, so the discussion in this section will be limited to the five senses.

On the other hand, the repertoire of experiences that are available to fundamental consciousness is very small. Electrons, chairs, and rocks may only have one type of experience, which is the MPE discussed above. How do we bridge the gap from fundamental to human consciousness?

Algorithmic level of analysis

Since consciousness is described by a mathematical object, all properties of consciousness should be tied to some feature of the mathematical object. The phenomenal palette, I propose, is linked to a topological feature of the mutual information manifold, namely its bottlenecks. (A10) A bottleneck is any region of a network that limits the spread of activity. As Shew notes, one example of a bottleneck in information theory is a processing unit that receives information from many different inputs but has an output of at most 1 bit. In other words, this unit can only have one of two states.

Fig 6. Visual metaphor for a bottleneck. Image taken from here.

Such bottlenecks reduce a complex array of information to a single, binary statement. For instance, consider a population of information-processing units that is trying to determine whether or not a scent is fragrant. The bottleneck unit in this population will integrate the inputs from the rest of the population in order to render a yes-or-no judgment about the scent. In turn, the output of the bottleneck gets transmitted to other processing units of the brain, which incorporate it with other sensory data (e.g. data about vision).
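The yes-or-no bottleneck can be sketched concretely. This example assumes, hypothetically, nine independent and equiprobable binary inputs feeding a unit that outputs 1 when most of them are active.

The output is a deterministic function of the inputs. So the mutual information between inputs and output equals the output’s entropy, and that can never exceed 1 bit, even though the inputs jointly carry 9 bits.

```python
import itertools
import math
from collections import Counter

N_INPUTS = 9  # odd, so the majority vote never ties

def bottleneck(inputs: tuple) -> int:
    """Hypothetical majority-vote unit: many inputs in, one bit out."""
    return int(sum(inputs) > len(inputs) // 2)

def entropy_bits(counts: Counter) -> float:
    """Entropy in bits of the empirical distribution given by counts."""
    total = sum(counts.values())
    return -sum(c / total * math.log2(c / total) for c in counts.values())

patterns = list(itertools.product((0, 1), repeat=N_INPUTS))  # all equally likely
input_entropy = math.log2(len(patterns))                      # 9 bits
output_counts = Counter(bottleneck(p) for p in patterns)
# The output is a deterministic function of the input, so I(inputs; output) = H(output).
mutual_information = entropy_bits(output_counts)

print(input_entropy, mutual_information)  # 9.0 1.0
```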

If we were to draw a directed graph of a toy model of this system, it might look something like Fig 7. Note that a) once again, a graph refers to a set of nodes connected by edges, and a directed graph specifies the direction of those connections; b) the graph is a discretization of the mutual information manifold, since it “decomposes” the continuous manifold into a set of discrete nodes.

Fig 7. Graph of a bottleneck that then relays its output to other information-processing units in the system.

Importantly, the bottleneck (call it node A) and the unit to which it relays information (B) do not share any common neighbors. In other words, A and B are joined by an edge, but no node connected to A is also connected to B. In the terminology of networks, this gives A a low clustering coefficient, since many pairs of A’s neighbors (B paired with each of A’s inputs) are not linked to each other. The clustering coefficient, which is itself a topological feature of any graph, is “the probability that any two neighbors of a node are also neighbors of each other,” according to researchers at the Santa Fe Institute. It can also be linked to a topological measure of mutual information, as illustrated by neuroscientist Shauizong Si. In particular, the clustering coefficient is mathematically related to the mutual information between the event that two nodes are connected by a link, and the event that they have common neighbors. (See footnote 15 for the details.)
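The local clustering coefficient of a node with k neighbors is the number of edges among those neighbors divided by k(k − 1)/2, the number of possible such edges.

The sketch below computes it on a hypothetical undirected version of a bottleneck graph. Node A receives three densely interconnected inputs and relays to B, whose own neighbors form a separate cluster.

```python
from itertools import combinations

def local_clustering(adj: dict, v: str) -> float:
    """Fraction of pairs of v's neighbors that are themselves connected."""
    neighbors = adj[v]
    k = len(neighbors)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(neighbors, 2) if b in adj[a])
    return links / (k * (k - 1) / 2)

edges = [("A", "i1"), ("A", "i2"), ("A", "i3"), ("A", "B"),  # bottleneck A
         ("i1", "i2"), ("i2", "i3"), ("i1", "i3"),            # input cluster
         ("B", "c1"), ("B", "c2"), ("c1", "c2")]              # downstream cluster
adj: dict = {}
for a, b in edges:
    adj.setdefault(a, set()).add(b)
    adj.setdefault(b, set()).add(a)

for node in ("A", "i1", "B"):
    print(node, round(local_clustering(adj, node), 3))
# A 0.5, i1 1.0, B 0.333
```

A’s coefficient is pulled down because B shares no neighbors with A’s inputs. Each input node sits inside a dense cluster and scores 1.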

On the other hand, the neighbors of the bottleneck may have a high clustering coefficient. (Note that Fig 7 is incomplete, as the neighbors are themselves connected to other nodes.) This property would hold true under the assumption that information-processing units for certain categories of experience cluster together in the brain, as we will see in the implementational analysis. For instance, the olfactory units that are processing information about whether an odor is fragrant may be clustered with units that are computing whether or not the same smell is, say, coppery. These latter units will converge on a bottleneck that renders a binary judgment about the “coppery-ness” of the odor.

Fig 8. Graph of two bottlenecks. Note the high clustering coefficient in the region where the bottlenecks are connected to each other. The red line separates clusters (the top half of the graph is meant to be just as densely interconnected as the bottom half; I just neglected to draw the edges).

So, distinct bottlenecks encode distinct outcomes of processing information, which correspond to different qualia. The flow of edges from bottlenecks across clusters then projects the qualia to the rest of the brain.

However, it may seem that phenomenal distinctions should not arise from different bottlenecks. Ultimately, bottlenecks are implemented as neuronal circuits or populations that integrate many inputs and output a binary signal. If all bottlenecks can be reduced to a binary signal, then why don’t all of those signals feel the same? Why, in our experience, do “fragrant” and “coppery” aromas smell different? This is a difficult question that gets right at the heart of the Hard Problem of Consciousness. I would tentatively respond to this objection by claiming that different bottlenecks participate in clusters with distinct topological measures of mutual information. Thus, qualia are characterized not only by the outcome of information processing, i.e. the bottleneck, but also by the topology of the network that processes the information, i.e. the cluster. Nevertheless, one could contend that this response is faulty because it implies that a computer circuit would have a broad phenomenal palette so long as it has lots of bottlenecks and sub-circuits with many different topological measures of mutual information. This contradicts my own intuitions about consciousness, and I hope to have a more airtight account of the phenomenal palette in subsequent blogposts.

There is an intriguing connection between bottlenecks and harmonic analysis of networks, which I discussed in Part I of this essay series. According to the Cheeger inequality, the second-smallest eigenvalue of a graph’s Laplacian matrix (whose eigenvectors encode the graph’s harmonics) sets upper and lower bounds on a measure that defines the smallest bottleneck of the graph. (See footnote 16 for the technical details.)

Implementational level of analysis

Information-processing clusters in the brain are implemented through selective signal enhancement, in which neurons compete with each other to broadcast their “interpretation” of a stimulus. Neuroscientist Michael Graziano compares these neurons to “candidates in an election, each one shouting and trying to suppress its fellows. At any moment only a few neurons win that intense competition, their signals rising up above the noise and impacting the animal’s behavior.” I suspect that bottlenecks in the connectome, which is the structure of all the connections in the brain, determine the winning signal and then broadcast it to consciousness.

The principle of functional localization, while highly flawed, yields the empirical observation that neurons cluster together into regions that are specialized for certain functions, rather than being spread out diffusely across the brain. Hence, we find that a high clustering coefficient in the connectome reflects functional segregation. In fact, neuroscientists have shown that the clustering coefficient in olfactory networks is correlated with the ability to distinguish between different smell qualia, i.e. odors.

Footnotes

(1) The most fundamental group in physics is the Poincaré group, which describes spatial rotations, displacements in space-time, and “boosts.” The numbers that characterize fundamental particles, namely their masses and their spins, are invariant under the transformations of the Poincaré group. Since a fundamental particle has no intrinsic physical properties other than mass, spin, and a few others, it follows that a particle can be identified with an irreducible representation of the Poincaré group.

(2) To quote physicist John Baez, “there’s a close relation between ‘vacuum states’ and representations of C*-algebras. In fact there’s a theorem called the GNS construction which makes this precise. But physically, what’s going on here? Well, it turns out that to define the concept of ‘vacuum’ in a quantum field theory we need more than the C*-algebra of observables: we need to know the particular representation. This becomes most dramatic in the case of quantum field theory on curved spacetime – a warmup for full-fledged quantum gravity. It turns out that in this setting, it’s a lot harder to get observers to agree on what counts as the vacuum than it was in flat spacetime. The most dramatic example is the Hawking radiation produced by a black hole. You may have heard pop explanations of this in terms of virtual particles, but if you dig into the math, you’ll find that it’s really a bit more subtle than that. Crudely speaking, it’s caused by the fact that in curved spacetime, different observers can have different notions of what counts as the vacuum! And to really understand this, C*-algebras are very handy.”

(3) For example, the rule:

{{x, y}, {x, z}} → {{x, z}, {x, w}, {y, w}, {z, w}}

transforms this graph

into a new graph

When this simple rule is iteratively applied many times, graphs of increasing complexity arise.
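One iteration of this rule can be sketched in a few lines. The sketch assumes a simplified updating order: scan the edge list and greedily rewrite non-overlapping matches in which two edges share their first node, creating one fresh node w per match.

This is not Wolfram’s reference implementation. The starting graph {{1, 2}, {1, 3}} and the name `apply_rule` are hypothetical choices.

```python
def apply_rule(edges: list, next_node: int) -> tuple:
    """Apply {{x, y}, {x, z}} -> {{x, z}, {x, w}, {y, w}, {z, w}} once.

    Matches are found greedily and never share an edge; unmatched edges
    are carried over unchanged. Returns (new_edges, next_fresh_node).
    """
    used = set()
    produced = []
    for i, (x, y) in enumerate(edges):
        if i in used:
            continue
        for j in range(i + 1, len(edges)):
            if j not in used and edges[j][0] == x:
                z = edges[j][1]
                w = next_node  # fresh node created by this match
                next_node += 1
                used.update((i, j))
                produced += [(x, z), (x, w), (y, w), (z, w)]
                break
    kept = [e for k, e in enumerate(edges) if k not in used]
    return kept + produced, next_node

graph, fresh = [(1, 2), (1, 3)], 4
for step in range(1, 6):
    graph, fresh = apply_rule(graph, fresh)
    print(step, len(graph))
```

Each match removes two edges and adds four, so the graph grows as long as some node still starts two edges.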

(4) A proponent of the Mathematical Universe Hypothesis might concede that math is not equivalent to the universe, yet still contend that an individual observer’s experience of the universe is mathematical in nature. It is certainly true that, given a certain mode of description (or frame of reference in rule space, as Wolfram would put it), math will explain physical phenomena in the universe. Yet math does not really explain why observers choose a particular mode of description in the first place. One has to appeal to facts outside of mathematics in order to account for the observer’s frame of reference. This phenomenon can be illustrated through an example relating to physical space. Given that a person is traveling at 5 miles per hour, and thereby occupies a certain frame of reference, the (mathematical) laws of physics predict that he will experience spacetime in a certain way. However, the fact that he is traveling at 5 miles per hour is not an inherently mathematical statement. Rather, it is an observation that we could model with mathematical tools like calculus.

(5) Andrés Gomez Emilsson, director of research at the Qualia Research Institute, subscribes to this view as well.

(6) The Integrated Information Theory of consciousness, developed by neuroscientist and polymath Giulio Tononi, is by far the best candidate for a panpsychist theory rooted in science. As far as I know, Tononi did not have panpsychism in mind when he was originally developing the theory, but it nevertheless does imply that inanimate entities are capable of “micro-consciousness.”

(7) This may be completely false. We are far too early in the stages of developing a theory of panpsychism to know whether physical laws like F = ma have any bearing on consciousness.

(8) This notion of “random variables” and their “values” may be foreign to you if you’ve never taken a university-level class on probability. An example of a random variable is the state of a coin. It can take on two values: heads or tails.

(9) I would venture that it shares something in common with the “minimal phenomenal experience” (MPE) defined recently by philosopher Thomas Metzinger (“phenomenal” is synonymous with “conscious”). The MPE is characterized more by what it is not than by what it is. (There’s some deep connection here between the MPE and apophatic theology, which I have discussed previously on this blog.) It is non-sensory, non-motor, atemporal, non-cognitive, non-egoic, unbounded (in other words, attention isn’t directed anywhere in particular), and aperspectival. We should expect all these properties to hold for fundamental consciousness, since air molecules obviously do not have any motor, sensory, or attentional capacities. Metzinger positively describes the MPE as “a mostly transparent experience of openness to the world, in combination with an abstract, non-conceptual representation of mere epistemic capacity, plus a spontaneously occurring, domain-general [expectation of future epistemic states] – but as yet without object.” I think this account of the MPE attributes too much to fundamental consciousness, especially in the context of panpsychism; how could air molecules possibly be capable of predicting their future states? Metzinger is more on the mark when he presents first-person reports of the MPE: it is a state in which “I just know that I am,” or in other words, a state of total, pure presence. Such accounts inevitably call to mind mystical experiences, which may prompt a skeptical reaction from hard-nosed materialists who don’t believe that spirituality has any bearing on consciousness. I disagree, though a detailed rebuttal is outside the scope of this footnote.

(10) You may have noticed that the definition of consciousness that I provided is algorithmic, and does not give any details on the implementation. I appear to imply that the implementation, i.e. the underlying physical substrate, of mutual information doesn’t matter. I do not, in fact, believe this; I think that most material substances and processes exclude the possibility of binding and expanding the phenomenal palette.

(11) In quantum field theory, the Lagrangian for a fermion is the following:

Eq. 3

There is a lot of math in this equation that I frankly don’t understand, but here’s the important point: if I multiply the field 𝜓 by a phase factor e^{i𝛼}, i.e. rotate it by an angle 𝛼 in the complex plane, then its Dirac adjoint, 𝜓̄, picks up the opposite phase e^{−i𝛼}, and L stays the same. Hence, the Lagrangian is invariant under global phase rotations, that is, rotations of the axes of the complex plane in which 𝜓 takes its values (a U(1) symmetry).
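The phase argument can be checked numerically for the mass term m𝜓̄𝜓. This assumes that Eq. 3 is the free Dirac Lagrangian 𝜓̄(iγ^μ∂_μ − m)𝜓. The derivative term is also invariant for a constant 𝛼, since ∂_μ(e^{i𝛼}𝜓) = e^{i𝛼}∂_μ𝜓.

The sketch below also assumes NumPy, the Dirac representation of γ⁰, and an arbitrary, hypothetical four-component spinor.

```python
import numpy as np

# gamma^0 in the Dirac representation (assumed convention).
gamma0 = np.diag([1, 1, -1, -1]).astype(complex)

def dirac_adjoint(psi: np.ndarray) -> np.ndarray:
    """psi-bar = psi^dagger gamma^0 (conjugate transpose times gamma^0)."""
    return psi.conj() @ gamma0

def mass_term(psi: np.ndarray, m: float = 1.0) -> complex:
    """m * psi-bar psi, the non-derivative part of the Dirac Lagrangian."""
    return m * (dirac_adjoint(psi) @ psi)

psi = np.array([1 + 2j, 0.5 - 1j, -0.3 + 0.1j, 2 - 0.7j])  # hypothetical spinor
alpha = 0.8
rotated = np.exp(1j * alpha) * psi  # global U(1) phase rotation

# psi-bar picks up exp(-i alpha) and psi picks up exp(+i alpha); they cancel.
print(np.isclose(mass_term(psi), mass_term(rotated)))  # True
```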

(12) For example, if I were to place an ant on a blanket and then shake the blanket such that it ripples and undulates in a very irregular fashion, then the geodesic between any two points would constantly be changing.

(13) The firing of neurons is not the only process that encodes information in the brain. Others include synaptic gain, functional connectivity, structural connectivity, and more.

(14) This analysis may not be true since the Infomax Principle only really holds for neural activity in sensory areas. The inferior parietal lobe, the precuneus, and many of the other regions in the “Global Workspace” that is activated by conscious experience are not primarily involved in sensory processing.

(15) The clustering coefficient is equivalent to the probability that a link exists between two nodes m and n given that they share a common neighbor z:

Eq. 4

where, to quote Si, “Γ(z) represents neighbors set of node z, $|E_z|$ denotes the existing link number in |Γ(z)|.”

Additionally, the mutual information between the event that there is a link between nodes m and n and the event that they share a common neighbor z is:

Eq. 5

where “Γ(z) is the neighbor set of z; $I(L^1_{m,n})$ represents the self-information of an event that node m and node n are connected; and $I(L^1_{m,n} \mid z)$ is used to describe the conditional self-information of the event that node m and node n are connected on the condition that node z is known as one of their common neighbors.”

Plugging Eq 4 into Eq 5, we get:

Eq. 6

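(16) Let G be a d-regular graph with n nodes, i.e. a graph in which every node has exactly d neighbors. The term Φ(S) defines how much of a bottleneck a set of nodes S is. In particular, Φ(S) = |E(S)|/(d|S|), where |E(S)| is the number of edges that exit the set, and |S| is the size of the set.

Additionally, the smallest bottleneck of G is:

$$\Phi^*(G) = \min_{|S| \le n/2} \Phi(S)$$

Eq. 7

Given the eigenvalues λ1 ≤ λ2 ≤ … ≤ λn of the normalized Laplacian of G, L = I − A/d (where A is the adjacency matrix of G), the Cheeger inequality states that

$$\frac{\lambda_2}{2} \le \Phi^*(G) \le \sqrt{2\lambda_2}$$

Eq. 8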
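The Cheeger inequality can be checked by brute force on a small graph. The sketch below assumes NumPy. Its hypothetical test graph, two triangles joined by one edge, has an obvious bottleneck but is not regular.

For that reason, the sketch uses the general degree-weighted bottleneck measure, the conductance φ(S) = |E(S)| / min(vol S, vol S̄). Here vol S is the sum of the degrees of the nodes in S. For a d-regular graph, vol S = d|S|, so φ(S) is |E(S)| divided by d times the size of the smaller side. The eigenvalue comes from the normalized Laplacian I − D^(−1/2) A D^(−1/2), which equals I − A/d for a d-regular graph.

```python
from itertools import combinations

import numpy as np

# Two triangles {0, 1, 2} and {3, 4, 5} joined by the single edge 2-3.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
n = 6
A = np.zeros((n, n))
for u, v in edges:
    A[u, v] = A[v, u] = 1.0
deg = A.sum(axis=1)

# Normalized Laplacian I - D^{-1/2} A D^{-1/2}; eigvalsh returns ascending eigenvalues.
d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))
laplacian = np.eye(n) - d_inv_sqrt @ A @ d_inv_sqrt
lambda2 = np.linalg.eigvalsh(laplacian)[1]

def conductance(S: set) -> float:
    """Edges leaving S divided by the volume of the smaller side of the cut."""
    cut = sum(1 for u, v in edges if (u in S) != (v in S))
    vol_S = sum(deg[u] for u in S)
    return cut / min(vol_S, deg.sum() - vol_S)

phi_star = min(conductance(set(S))
               for size in range(1, n)
               for S in combinations(range(n), size))

print(lambda2 / 2 <= phi_star <= np.sqrt(2 * lambda2))  # True
print(round(phi_star, 4))  # 0.1429, i.e. 1/7: cutting the bridge edge
```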

Acknowledgments

(A1) The term “full-stack approach,” in relation to consciousness research, was coined by Mike Johnson, CEO of the Qualia Research Institute.

(A2) I was first made aware of the Binding Problem and the Palette Problem through my internship(s) at QRI.

(A3) QRI has explored the use of a graph formalism in order to model low-hanging fruit properties of consciousness. QRI believes a full formalism of consciousness will require a richer mathematical object than graphs.

(A4) While QRI is officially agnostic about most metaphysical positions on consciousness (aside from two claims, “qualia formalism” and “valence structuralism”), it does agree more with panpsychism than with other philosophies, such as functionalism and eliminativism. Note that QRI and I independently arrived at the conclusion that panpsychism is the true philosophy of consciousness.

(A5) QRI introduced me to Marr’s levels of analysis last summer.

(A6) Johnson has also speculated that “qualia space” may be a Hilbert space, and he and I shared a few conversations about this last summer.

(A7) Gauge invariances are related to Noether’s Theorem, which states that every continuous symmetry of a system described by a Lagrangian is associated with a conserved quantity. Johnson has suggested that applying Noether’s Theorem to the study of consciousness could yield many important discoveries.

(A8) My writing on coherence was influenced by research that Emilsson has recently conducted on the topic. In fact, the effect of Emilsson’s thinking on my own was more significant than I initially realized or acknowledged. Emilsson pointed out to me that phase-locking, a phenomenon closely tied to coherence, maximizes energy efficiency.

(A9) Thank you to Emilsson for informing me that the state space of smells is so enormous.

(A10) Emilsson has argued that topology is relevant for fundamental properties of consciousness, such as binding. He has also stated that the topology of a person’s “world-sheet,” which encodes the semantic content of his conscious experience, bifurcates during a breakthrough DMT experience. Finally, one of Johnson’s “Eight Problems of Consciousness,” as discussed in “Principia Qualia”, is the “Topology of Information Problem,” which asks: “how do we restructure the information inside the boundary of the conscious system into a mathematical object isomorphic to the system’s phenomenology?”