Editor’s Note

For another overview and critique of Integrated Information Theory, take a look at Mike Johnson’s “Principia Qualia.”

Intro

In this blog, I’ve made many conjectures about the mathematical structure of consciousness without carefully reviewing the existing literature on the science of consciousness. To remedy that, I’m writing about the most promising scientific theory of consciousness, Giulio Tononi’s Integrated Information Theory (IIT). While IIT is still very controversial, it does have plenty of empirical support and therefore serves as a more compelling foundation for the study of consciousness than my untested – and perhaps untestable – hypotheses. In this essay series, I’ll give a broad overview of IIT (part I) and then critique various aspects of it (subsequent parts).

Integrated Information Theory: nuts and bolts (axioms and postulates)

IIT stems from five axioms about the nature of consciousness:

(A1) Consciousness is intrinsic; that is, from my perspective as a conscious being, it is “immediately and absolutely evident” that I am conscious. There is nothing that is more demonstrably false than the claim that I am not conscious, for all of my knowledge, including the knowledge that I exist, is predicated on the fact of my consciousness.

(A2) Consciousness is compositional; it is composed of elementary perceptual phenomena that can be combined into higher-order experiences, just as individual grains of sand combine into the visual percept of a beach.

(A3) Consciousness is informative; each experience contains information by virtue of the fact that it differs from other ones in specific ways. My experience of my laptop, for instance, contrasts with my experience of vanilla ice cream.

(A4) Consciousness is integrated; each experience is unitary and cannot be divided into smaller “sub-experiences.” When I look at my laptop, I cannot simultaneously have two separate experiences of the screen and the keyboard; at one moment in time, I will have a single experience of the laptop. (See previous blogposts for more discussion of this topic.)

(A5) Consciousness is exclusive; each experience has clear boundaries that exclude other experiences. My visual field, for instance, ends at the perimeter of my room, so I cannot experience any visual phenomena outside of it. In other words, my consciousness does not consist of a superposition of qualia both inside and outside my room.

From these five axioms, IIT derives five corresponding postulates about the causal properties of the physical substrate of consciousness (PSC):

(P1) The PSC must have intrinsic cause-effect power; it must be able to cause an effect to itself, that is, change itself. As Tononi writes, “A minimal system consisting of two interconnected neurons satisfies the criterion of intrinsic existence because, through their reciprocal interactions, the system can make a difference to itself.”

(P2) Individual elements of the PSC can be combined, but both the elements and their aggregates must have cause-effect power.

(P3) The PSC must encode a cause-effect structure, which consists of all the cause-effect repertoires corresponding to the mechanisms of the PSC. The cause-effect repertoires are probability distributions over the past and future states of the system given its current state or “mechanism.” The mechanism, to be precise, is a configuration of elements that has cause-effect power on the PSC. A mechanism is more informative the more it constrains the cause-effect repertoire of the PSC; indeed, if a mechanism could have been caused by any set of elements and could have any kind of effect on the PSC, then it would contain zero information.

(P4) The cause-effect structure of the PSC must be irreducible to the cause-effect structures corresponding to independent subsets of the PSC. Irreducibility, measured as integrated information or Φ, signifies the level of integration in the PSC, and more specifically the extent to which the whole of the PSC is greater than the sum of its parts. For example, the information in a book has zero Φ because it is totally reducible; if I were to tear out a page of the book, the sum of the information in that page and the rest of the book is equivalent to the total information in the book in its original state.

(P5) A single set of elements can encode multiple cause-effect structures, but only one of these can correspond to the contents of consciousness, since A5 indicates that one experience excludes others. This cause-effect structure is the one that is maximally irreducible.

Thus, a set of elements that satisfies all five of these postulates is a maximally irreducible cause-effect structure, which Tononi also refers to as a “conceptual structure.” (He defines a concept as a cause-effect repertoire that is maximally irreducible.) An experience is therefore identical to a conceptual structure.

An example

These axioms and postulates are very abstract. What exactly is an element of a PSC? What is a cause-effect structure? Hopefully the following example, which is provided in Tononi’s 2014 paper “From the Phenomenology to the Mechanisms of Consciousness: Integrated Information Theory 3.0”, will resolve some confusion.

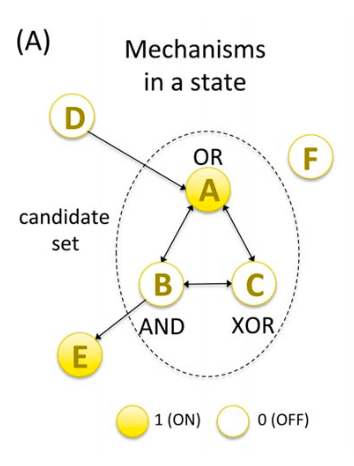

Consider a system of six logic gates A, B, C, D, E, and F, built from three types of gate: AND, OR, and XOR (Fig 1). Logic gates are circuits with at least one input and exactly one output. (Neurons can be modeled as logic gates.) AND computes the conjunction of two inputs; for instance, if the first input is 1 and the second is 0, the output is 0, but if both inputs are 1, the output is 1. OR computes logical disjunction, so if one input is 1 and the other is 0, the output is 1. Finally, XOR computes the “exclusive or” operation; it outputs a 1 only if exactly one of the inputs is 1. The system satisfies the five postulates as follows:

(E1) Due to its reciprocal connections, the system is able to make a difference to itself, as Tononi puts it, by triggering certain elements to turn on and off. Hence, the system has intrinsic cause-effect power.

(E2) Elementary mechanisms A, B, and C can be combined into higher-order mechanisms AB, BC, AC, and ABC.
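The combinations listed in (E2) are simply the non-empty subsets of the elements. A minimal sketch of that enumeration follows; the function name `candidate_mechanisms` is hypothetical and is not taken from Tononi's paper.

```python
from itertools import combinations


def candidate_mechanisms(elements):
    """Return every non-empty subset of `elements` as a joined label."""
    return [
        "".join(subset)
        for size in range(1, len(elements) + 1)
        for subset in combinations(elements, size)
    ]


mechanisms = candidate_mechanisms("ABC")
print(mechanisms)  # ['A', 'B', 'C', 'AB', 'AC', 'BC', 'ABC']
```

For n elements this yields 2^n - 1 candidates, which is one reason why exhaustive IIT calculations become expensive quickly.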

(E3) The information conveyed by a mechanism is the extent to which the mechanism constrains or reduces uncertainty about the past and future states of the system. The repertoire of past states, given a certain mechanism, is referred to as the cause repertoire of that mechanism. Similarly, the repertoire of future states, given the mechanism, is the effect repertoire of that mechanism. The cause (effect) information is the distance between the cause (effect) repertoire and the unconstrained past (future) of the system. For instance, when mechanism A is turned on, its cause repertoire is {p(000) = 0, p(100) = 0, p(010) = p(110) = p(001) = p(101) = p(011) = p(111) = 1/6}. (In each of these states, the first digit represents the on-off state of A, the second digit the state of B, and the third digit the state of C.) The unconstrained cause repertoire – that is, the distribution over past states of the system without knowledge of A’s state – is simply the uniform distribution {p(000) = p(100) = p(010) = p(110) = p(001) = p(101) = p(011) = p(111) = 1/8}. The distance between these two repertoires, i.e. the cause information, is computed as the earth mover’s distance (EMD), which is rather complicated and, for the sake of brevity, won’t be defined here. (See the following link for more information.) Once the corresponding calculations are performed for the effect information, the cause-effect information is computed as the minimum of the cause and effect information. Insofar as the cause-effect information of a mechanism is greater than zero, the mechanism is informative. The cause-effect structure of the system can subsequently be determined by calculating the cause-effect information for all the constituent mechanisms.
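The cause repertoire quoted in (E3) can be reproduced with a few lines of Python. This is only a sketch and rests on two assumptions that are not stated in the text above. First, A is taken to be an OR gate whose inputs are B and C, which is consistent with the repertoire listed (the two past states with B = C = 0 get probability zero). Second, the earth mover's distance is taken to use the Hamming distance between states as its ground metric. The helper names are hypothetical, and the brute-force EMD is only practical for tiny distributions like this one.

```python
from fractions import Fraction
from itertools import permutations, product
from math import lcm  # requires Python 3.9+

# All 8 on/off states of (A, B, C), written in the text as three digits "ABC".
STATES = list(product((0, 1), repeat=3))


def cause_repertoire_a_on():
    """Distribution over past states given that A is currently on.

    Assumption: A is an OR gate reading B and C, so only past states
    with B = 1 or C = 1 could have switched A on.
    """
    compatible = [s for s in STATES if (s[1] | s[2]) == 1]
    return {
        s: Fraction(1, len(compatible)) if s in compatible else Fraction(0)
        for s in STATES
    }


def hamming(s, t):
    """Number of elements whose on/off state differs between s and t."""
    return sum(x != y for x, y in zip(s, t))


def emd_bruteforce(p, q):
    """Earth mover's distance with a Hamming ground metric (assumption).

    The surplus and deficit of p relative to q are cut into equal unit
    chunks, and every assignment of chunks is tried. Only feasible when
    the number of chunks is small.
    """
    diff = {s: p[s] - q[s] for s in STATES}
    unit = lcm(*(d.denominator for d in diff.values()))
    surplus = [s for s in STATES for _ in range(int(max(diff[s], 0) * unit))]
    deficit = [s for s in STATES for _ in range(int(max(-diff[s], 0) * unit))]
    best = min(
        sum(hamming(a, b) for a, b in zip(surplus, perm))
        for perm in permutations(deficit)
    )
    return Fraction(best, unit)


uniform = {s: Fraction(1, 8) for s in STATES}
cause_rep = cause_repertoire_a_on()
cause_info = emd_bruteforce(cause_rep, uniform)

for s in STATES:
    print("".join(map(str, s)), cause_rep[s])
print("cause information:", cause_info)
```

Under these assumptions the sketch assigns probability 0 to 000 and 100, 1/6 to the other six states, and returns a cause information of 1/3.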

(E4) In order to compute the irreducibility of the system, we must first define what it means to reduce the system into independent parts. In IIT, reduction is specified by the minimum information partition (MIP), which is, according to Tononi, “the partition that makes the least difference to the cause and effect repertoires.” If the information of the whole mechanism is greater than the information of the mechanism partitioned along the MIP, then the system is irreducible; however, if they are equal, then the system is reducible. In our example system, given that A is the current mechanism of the system, the MIP partitions the system’s past states into AB and C and the future states into AC and B. The cause repertoire is then evaluated as the probability of each of these partitioned states given a partition of the current mechanism. (A can be partitioned into AB = 10 and C = 0, for instance. Honestly, I am not too sure why Tononi partitions the current mechanism in the way that he does.) Hence, the cause and effect repertoires of the partitioned mechanism are as follows, respectively (note that the superscripts p, c, and f refer to past, current, and future):

$$p(ABC^{p} \mid A^{c})/\mathrm{MIP} = p(C^{p} \mid AB^{c} = 10) \times p(AB^{p} \mid C^{c} = 0)$$

$$p(ABC^{f} \mid A^{c})/\mathrm{MIP} = p(AC^{f} \mid ABC^{c} = 100) \times p(B^{f})$$

The irreducibility φ of the cause (effect) repertoire of a mechanism is quantified as the distance between the cause (effect) repertoire of the whole mechanism and the cause (effect) repertoire of the partitioned mechanism, i.e. $\varphi_{\text{cause}} = D\big(p(ABC^{p} \mid A^{c}) \,\|\, p(ABC^{p} \mid A^{c})/\mathrm{MIP}\big)$ and $\varphi_{\text{effect}} = D\big(p(ABC^{f} \mid A^{c}) \,\|\, p(ABC^{f} \mid A^{c})/\mathrm{MIP}\big)$, where || means “between.” The total φ of the mechanism is then the minimum of $\varphi_{\text{cause}}$ and $\varphi_{\text{effect}}$.

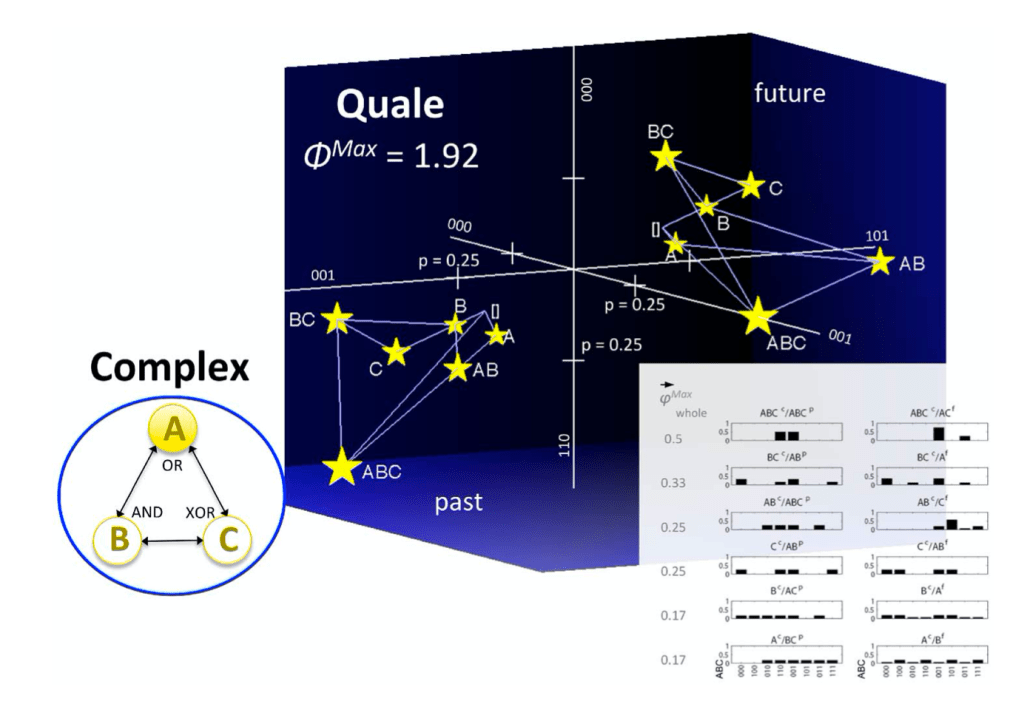

(E5) The maximally irreducible cause (effect) repertoire of a mechanism is determined by the past (future) state that maximizes $\varphi_{\text{cause}}$ ($\varphi_{\text{effect}}$) for the mechanism. In our example system, these past and future states are BC and B, respectively. $\varphi^{\text{Max}}$ is then the minimum of $\varphi_{\text{cause}}^{\text{Max}}$ and $\varphi_{\text{effect}}^{\text{Max}}$.

Note that, in this section, I have actually restricted the discussion to single mechanisms rather than systems of mechanisms. The methods of computing the same quantities, e.g. φMax, differ for systems of mechanisms, but the underlying principles are the same.

In Part II of this essay series, I will begin my critique of IIT, in particular focusing on IIT’s notion of causation.

Technical Appendix A

Note: This technical appendix pertains to a claim that I made in another blogpost, “A Better Turing Test?”

IIT argues that an experience is identical to a maximally irreducible conceptual structure (MICS) specified by a set of elements (Fig A1). We can determine the conditions for resonance between two PSCs by computing the eigenvectors of the graph Laplacians for the two corresponding MICS. That is, following a technique developed by the neuroscientist Selen Atasoy and discussed by the Qualia Research Institute, we treat the MICS as a graph: a set of nodes connected by edges, where the nodes are concepts and the edges are overlaps between the past and future states of concepts. We then compute the Laplacian matrix of that graph as the degree matrix minus the adjacency matrix. The eigenvectors of the Laplacian then correspond to the “graph harmonics,” and the associated eigenvalues play the role of their natural frequencies. The harmonics are the basic building blocks of oscillations in the MICS, in which certain concepts are activated and others are inactivated. We can find out whether two PSCs resonate by calculating whether the graph harmonics of their MICS have similar natural frequencies.
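The Laplacian step can be sketched with NumPy on two toy graphs. Everything specific here is an assumption made for illustration: the 4-node path and cycle graphs stand in for two MICS, the function name `graph_harmonics` is hypothetical, and comparing the sorted eigenvalues with a Euclidean norm is just one crude way to ask whether two spectra are "similar."

```python
import numpy as np


def graph_harmonics(adjacency):
    """Return (eigenvalues, eigenvectors) of the graph Laplacian L = D - A.

    Eigenvalues come back in ascending order; column i of the eigenvector
    matrix is the harmonic that belongs to eigenvalue i.
    """
    adjacency = np.asarray(adjacency, dtype=float)
    degree = np.diag(adjacency.sum(axis=1))
    laplacian = degree - adjacency
    # eigh is appropriate because the Laplacian of an undirected graph
    # is a real symmetric matrix.
    return np.linalg.eigh(laplacian)


# Toy stand-ins for two MICS graphs (hypothetical, for illustration only).
path = [[0, 1, 0, 0],
        [1, 0, 1, 0],
        [0, 1, 0, 1],
        [0, 0, 1, 0]]
cycle = [[0, 1, 0, 1],
         [1, 0, 1, 0],
         [0, 1, 0, 1],
         [1, 0, 1, 0]]

path_vals, path_vecs = graph_harmonics(path)
cycle_vals, cycle_vecs = graph_harmonics(cycle)

# A crude similarity score: distance between the two sorted spectra.
spectral_distance = np.linalg.norm(path_vals - cycle_vals)

print("path spectrum: ", np.round(path_vals, 3))
print("cycle spectrum:", np.round(cycle_vals, 3))
print("spectral distance:", round(float(spectral_distance), 3))
```

The smallest eigenvalue of each connected graph is 0, with a constant eigenvector; the higher harmonics are the ones that distinguish the two graphs.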